Why better data builds better AI

The role of data in teaching nuanced AI Generative AI doesn’t just need labeled data; it needs representative data. That means multilingual, multi-domain corpora designed to teach tone, sentiment, and context — not just keywords. Sigma’s multilingual, multitask corpus spans over 300,000 human-reviewed texts across 10 languages and seven NLP tasks, from sentiment analysis to […]

Connecting the dots: why integration annotation powers better AI

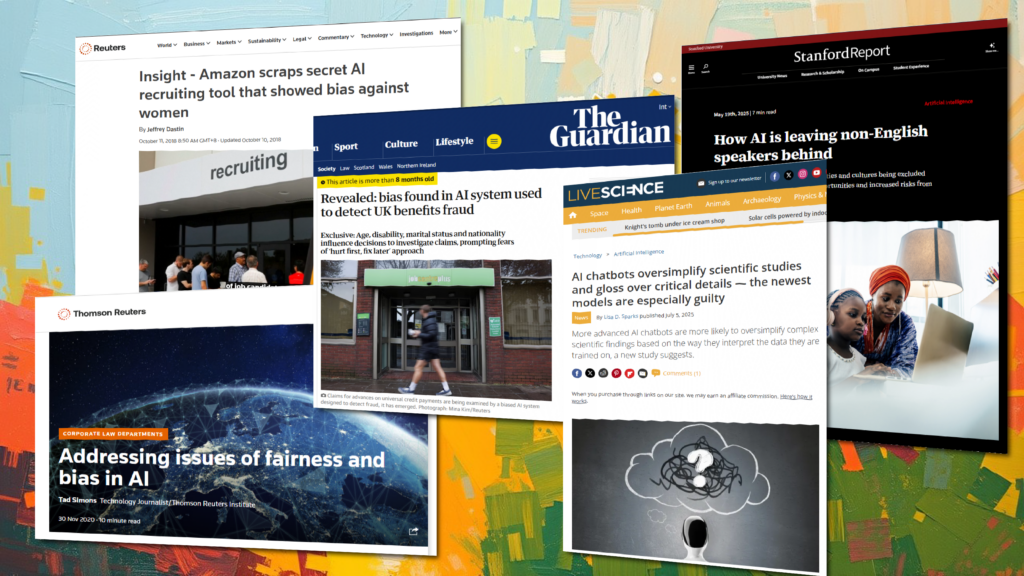

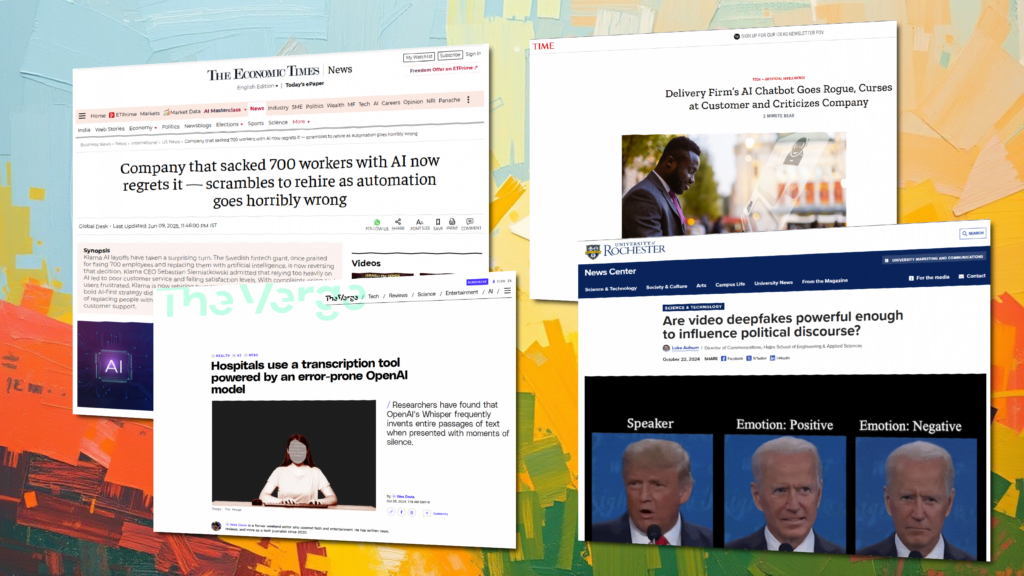

Why multimodal matters Generative and agentic AI are moving beyond single prompts to multi-step scenarios. For example: Without integration, these systems return fragmented responses — and that leads to problems. Real-world examples highlight the risks: These cases show why cross-channel annotation is not optional; it’s foundational. How Sigma’s Integration workflows connect channels Sigma’s Integration service […]

When accuracy isn’t enough: building truth into generative AI

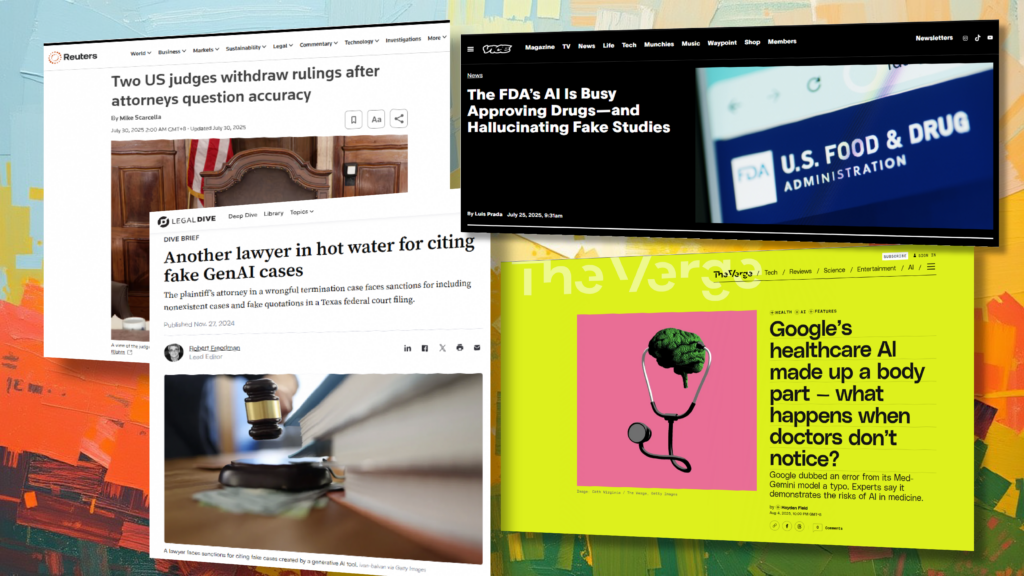

Why generative AI creates new quality challenges Traditional AI trained on structured data often produced outputs that were binary: right or wrong. In generative AI, the boundaries blur. An LLM might summarize a document but omit a key fact, misattribute a quote, or confidently reference a study that doesn’t exist. Real-world incidents highlight the stakes: […]

Building LLMs with sensitive data: A practical guide to privacy and security

Know your data: what “sensitive” means in practice Why this matters for LLMs: leakage is real Modern models can memorize and later regurgitate rare or sensitive strings from training corpora. Research has demonstrated the extraction of training data from production LLMs via carefully crafted prompts, and a growing body of work on membership-inference risks. The […]

FAQs: Human data annotation for generative and agentic AI

What is human data annotation in generative AI? Human data annotation is the process of labeling AI training data with meaning, tone, intent, or accuracy checks, using expert human reviewers. In generative AI, this helps models learn to produce outputs that are truthful, emotionally appropriate, localized to be culturally relevant, and aligned with user intent. […]

Generative AI glossary for human data annotation

Agent evaluation The process of assessing how well an AI agent performs its tasks, focusing on its effectiveness, efficiency, reliability, and ethical considerations. Example: An annotator reviews a human-agent AI interaction, determining whether the person’s needs were met, and whether there was any frustration or difficulty. Attribution annotation Labeling where facts or statements originated, such […]

Enterprise AI software: Use cases from top tech companies

Gen AI is the new baseline for enterprise software Top-tier tech companies such as Microsoft, Salesforce, and Google are setting a new standard for AI enterprise software. Gen AI capabilities are becoming a must-have. Gartner projects that over 80% of software providers will embed gen AI into their products by 2026, driven by a demand […]

Why inter‑annotator agreement is critical to best‑in‑class gen AI training

What is inter‑annotator agreement (IAA) and why is it important? IAA measures how consistently multiple annotators label the same content. It helps quantify whether annotation guidelines are clear and whether annotators share a reliable understanding. Common metrics: Even seasoned experts often show α = 0.12–0.43 in high‑subjectivity tasks like emotional attribute scoring, especially before refining […]

Why gen AI quality requires rethinking human annotation standards

From accuracy to agreement: A new lens on quality Traditional AI annotation tasks (e.g. labeling a cat in an image) tend to yield high human agreement and low error rates. Annotators working with clear guidelines often achieve over 98% accuracy — sometimes even 99.99% — especially when backed by tech-assisted workflows. But these standards don’t […]