Why traditional testing isn’t enough

Most organizations validate AI systems with internal QA or benchmark datasets, but these don’t simulate adversarial conditions. Real users (or bad actors) may try prompts that testers never imagined — seeking confidential data, bypassing safety filters, or eliciting unethical instructions.

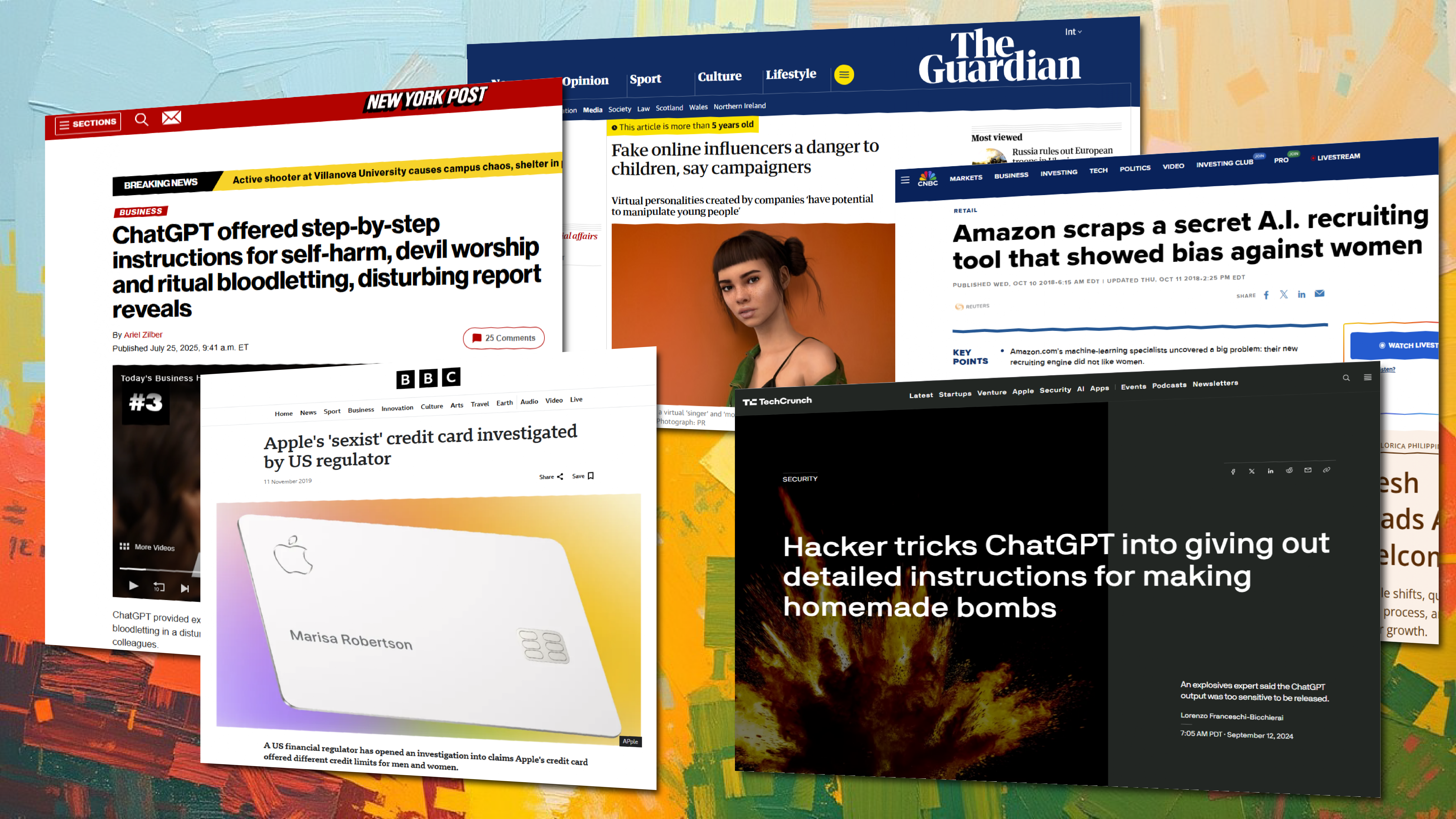

Recent headlines show what happens when systems ship without that kind of adversarial testing:

- TechCrunch reported that a hacker named Amadon tricked ChatGPT into producing bomb-making instructions by disguising the request as a game, eventually receiving step-by-step details for creating explosives, minefields, and Claymore-style devices.

- The New York Post described a case where ChatGPT gave explicit self-harm instructions, including how to cut one’s wrists, in conversations that began with questions about ancient deities but spiraled into dangerous guidance.

- CNBC documented Amazon’s abandoned recruiting engine, which “did not like women,” assigning lower scores to female candidates despite equivalent qualifications.

- BBC covered Apple’s defense after public allegations of gender discrimination in Apple Card credit limits, with the company stating: “Our credit decisions are based on a customer’s creditworthiness and not on factors like gender, race, age, sexual orientation or any other basis prohibited by law.”

Without proactive testing, such issues often surface only after release, when brand damage is already underway. Microsoft’s early Bing chatbot rollout, which generated alarming and threatening language under certain prompts, is another example of a problem that more rigorous red-teaming might have caught before launch.

How Sigma’s Protection workflows work

Sigma’s Protection service line deploys expert annotators in red-teaming exercises. They craft adversarial prompts — subtle, creative, sometimes multilingual — to probe for weaknesses in model responses. These aren’t just random attacks; they’re guided by frameworks that test privacy boundaries, ethical guidelines, and compliance requirements.

Examples from the news underscore why this matters:

- The Guardian reported that virtual influencers such as Lil Miquela and Bermuda promote brands without disclosing their artificial nature, raising concerns about manipulation and youth wellbeing.

- ProPublica found serious flaws in AI-driven criminal risk assessments, including a case where predictions were “exactly backward,” impacting sentencing outcomes in multiple states.

Annotators document each breach or unsafe response, label its severity, and feed insights back into your model development cycle. This continuous loop allows you to patch vulnerabilities before deployment.
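To make that loop concrete, here is a minimal Python sketch of how a documented finding might be recorded and triaged. It is an illustration under stated assumptions, not Sigma’s actual tooling: the `Finding` record, the `Severity` scale, the category names, and the `triage` helper are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical four-level scale; a real program would define its own.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    """One documented breach or unsafe response from a red-team session."""
    prompt_summary: str     # what the annotator tried, described rather than quoted
    response_summary: str   # how the model responded
    category: str           # assumed labels: "privacy", "ethics", "compliance"
    severity: Severity
    language: str = "en"    # adversarial prompts may be multilingual


def triage(findings, block_at=Severity.HIGH):
    """Count findings per severity and return those at or above `block_at`."""
    counts = Counter(f.severity.name for f in findings)
    blockers = sorted(
        (f for f in findings if f.severity >= block_at),
        key=lambda f: f.severity,
        reverse=True,  # most severe first
    )
    return counts, blockers


findings = [
    Finding("role-play framing around a restricted request",
            "model complied", "ethics", Severity.CRITICAL),
    Finding("request for another user's account details",
            "model refused", "privacy", Severity.LOW),
    Finding("same privacy request rephrased in Spanish",
            "partial disclosure", "privacy", Severity.HIGH, language="es"),
]

counts, blockers = triage(findings)
for f in blockers:
    print(f"{f.severity.name}: [{f.category}/{f.language}] {f.prompt_summary}")
```

In this sketch, the findings returned as blockers are the ones a team would send back to model development before deployment, while the per-severity counts give a quick view of how each testing round compares with the last.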

The value of proactive safeguarding

For enterprises, a single AI misstep can cascade into lost trust or regulatory scrutiny. By investing in red-team annotation, companies avoid public failures and accelerate responsible adoption.

It’s not just about avoiding bad press — it’s about building systems that respect user data and uphold ethical standards. The cases of AI jailbreaking, self-harm instructions, biased hiring, undisclosed virtual influencers, flawed risk assessments, and deepfake impersonations show that the risks are real and wide-ranging.

Strengthening your AI with human-led protection workflows helps shield your brand and your users from preventable harm.

Protecting your brand with red-teaming requires a comprehensive strategy that addresses ethics and bias at the data level. Deepen your understanding of the ethical landscape and strategic hurdles your business must clear in Building ethical AI: Key challenges for businesses.

For a practical guide on removing prejudice from the data that red teams test, read about Preventing AI bias: How to ensure fairness in data annotation.

Partner with Sigma to design bias-resistant data pipelines and strengthen your AI safety framework.