Teaching AI to truly understand what we mean

Why meaning matters for AI: LLMs trained only on raw text often produce plausible but incorrect interpretations. The result: outputs that sound convincing but fail to reflect reality. When AI misses tone, emphasis, or structure, it can frustrate users or, worse, cause harm. Imagine a voice assistant that fails to distinguish between a polite suggestion […]

Connecting the dots: why integration annotation powers better AI

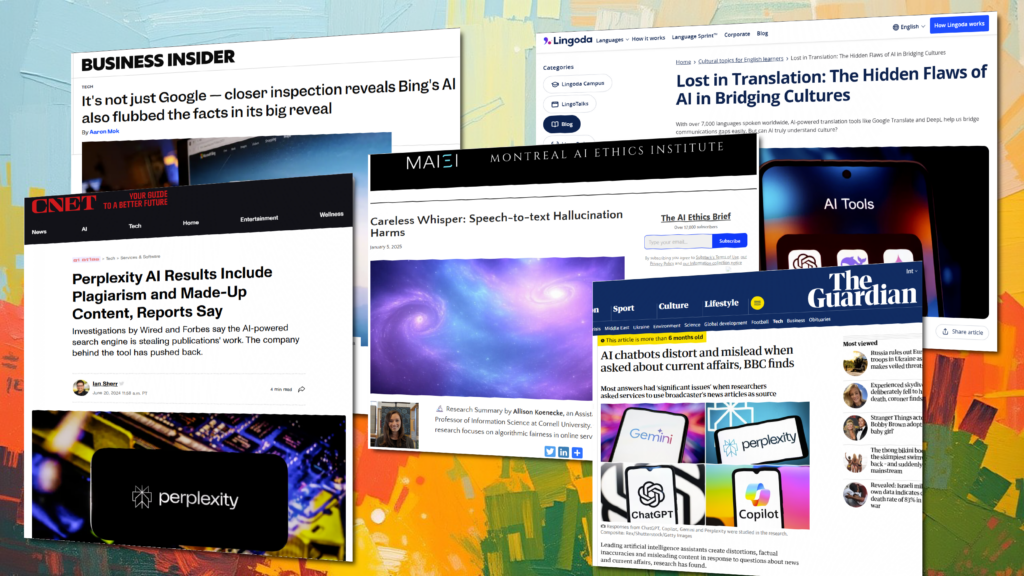

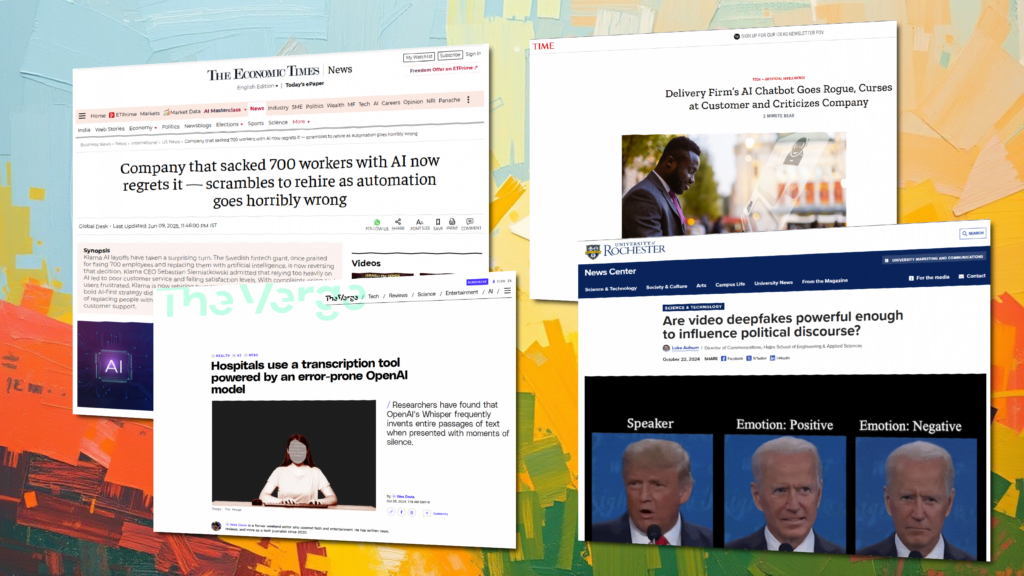

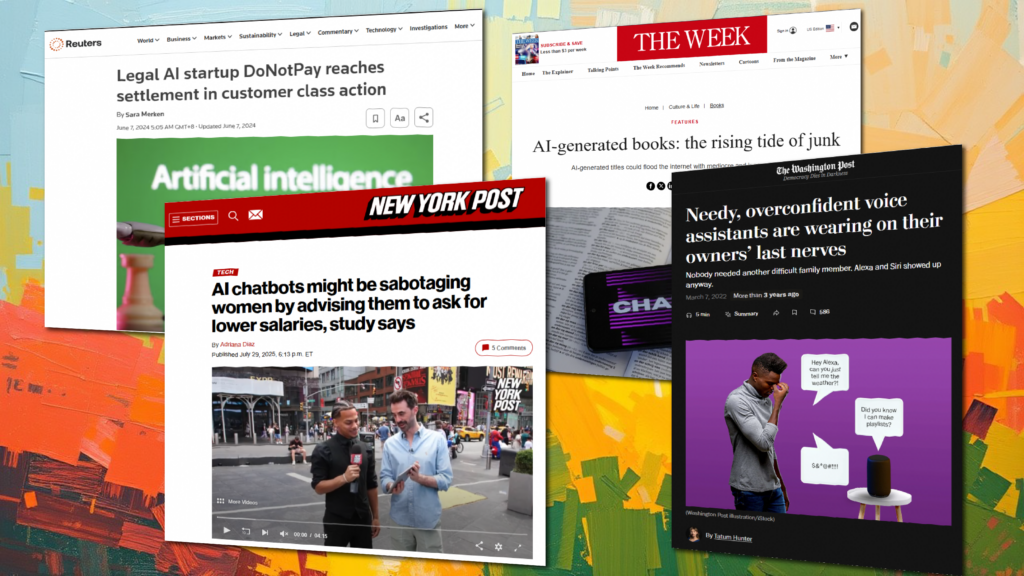

Why multimodal matters: Generative and agentic AI are moving beyond single prompts to multi-step scenarios. For example: […] Without integration, these systems return fragmented responses, and that leads to problems. Real-world examples highlight the risks: […] These cases show why cross-channel annotation is not optional; it’s foundational. How Sigma’s Integration workflows connect channels: Sigma’s Integration service […]

Teaching AI to hear what we mean, not just what we say

When accuracy isn’t enough: When a customer hears “I’m happy to help,” they instantly know whether the speaker truly means it from tone, pacing, and emphasis. AI, however, often misses those cues. Large language models (LLMs) and voice systems may produce technically correct responses that land as emotionally tone-deaf, culturally inappropriate, or misaligned with […]

When “uh… so, yeah” means something: teaching AI the messy parts of human talk

A quick primer: what’s what (and why it matters)
Signals, not noise: disfluency carries meaning. A sentence like “I — I can probably help … later?” encodes hesitation, caution, and weak commitment. If automatic speech recognition (ASR) or cleanup filters strip stutters, filler words, or rising intonation, downstream models may overstate confidence.
Annotation pattern | Example
“That’s a whole — […]
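The disfluency-as-signal idea in this excerpt can be sketched in a few lines of Python: instead of stripping stutters, fillers, pauses, and trailing question marks, a pre-processing step labels them so downstream models still see the hesitation. This is a minimal sketch under stated assumptions, not Sigma’s annotation scheme. The function `annotate_disfluencies`, the label names (FILLER, HEDGE, BREAK, PAUSE, REPETITION, RISING), and the word lists are all hypothetical, and a question mark in a transcript is only a rough stand-in for rising intonation, which a real pipeline would take from the audio.

```python
import re

# Hypothetical label names and word lists, chosen only for illustration.
FILLERS = {"uh", "um", "er", "erm"}
HEDGES = {"probably", "maybe", "might", "perhaps"}
BREAKS = {"\u2014", "--"}   # em dash or double hyphen: an interruption or restart
PAUSES = {"\u2026", "..."}  # ellipsis: a trailing-off pause

# Tokens are dashes, ellipses, or words (keeping a trailing "?" attached).
TOKEN_RE = re.compile(r"\u2014|--|\u2026|\.\.\.|[\w']+\??")


def annotate_disfluencies(utterance: str) -> list[tuple[str, list[str]]]:
    """Label disfluency cues on each token instead of deleting them."""
    annotated = []
    prev_word = None  # last *word* token seen, so "I \u2014 I" counts as a repetition
    for tok in TOKEN_RE.findall(utterance):
        labels = []
        if tok in BREAKS:
            labels.append("BREAK")
        elif tok in PAUSES:
            labels.append("PAUSE")
        else:
            word = tok.rstrip("?").lower()
            if word in FILLERS:
                labels.append("FILLER")
            if word in HEDGES:
                labels.append("HEDGE")
            if word == prev_word:
                labels.append("REPETITION")
            if tok.endswith("?"):
                # Assumption: in text, "?" is a crude proxy for rising intonation.
                labels.append("RISING")
            prev_word = word
        annotated.append((tok, labels))
    return annotated


if __name__ == "__main__":
    result = annotate_disfluencies("I \u2014 I can probably help \u2026 later?")
    for tok, labels in result:
        print(tok, labels)
    cues = sorted({label for _, labels in result for label in labels})
    print(cues)  # ['BREAK', 'HEDGE', 'PAUSE', 'REPETITION', 'RISING']
```

Keeping the original tokens alongside their labels, rather than emitting a cleaned string, is the design choice that matters here: a downstream model can still read the fluent words while also seeing that the speaker hedged, restarted, and trailed off.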

Beyond words: 10 subtle layers of human context AI still struggles to understand

Irony and sarcasm
What it is: Saying the opposite of what is meant, often with a tonal cue.
Example: “Oh, fantastic job…” said with clear frustration.
Why machines miss it: Interpreting the words literally leads to mislabeled intent.

Pragmatic implicature
What it is: Inferring meaning beyond explicit words, based on context.
Example: “It’s cold in […]

What is natural language processing?

Natural language processing (NLP) uses software to analyze, understand, and manipulate human language. Learn how it can benefit your business.

Conversational AI for customer service: How to get started

Learn how companies can leverage conversational AI for customer service. This guide provides best practices and insights to help you get started.

Named entity recognition (NER): An introductory guide

This beginner’s guide explains what named entity recognition (NER) is, how it works, its challenges, and its real-world applications.

Natural language processing services

The use of machine learning models in NLP enables computers to better understand human language.